AI Tutorial: Understanding the basics and ecosystem

Abstract

This article serves as the foundational entry in our Embedded AI Series. While traditional IoT relies on sending raw data to the cloud for processing, the paradigm is shifting. We are moving toward AIoT (Artificial Intelligence of Things), where devices process data locally.

This guide defines the core concepts of TinyML, explains why we need it, and breaks down the major software ecosystems available for microcontrollers today: Edge Impulse, STM32 X-CUBE-AI, NanoEdge AI Studio, and CMSIS-NN (via Arduino libs). By the end of this article, you will understand the “Three-Layer Architecture” of an embedded AI application and be ready to select the right tool for your first project.

1. Introduction to Embedded AI and the TinyML Revolution

1.1 The Shifting Landscape of the Internet of Things (IoT)

The rapid proliferation of connected devices, estimated to reach tens of billions in the coming years, has made the traditional centralized cloud-processing model increasingly unsustainable. Conventional IoT relies on sensors continuously streaming raw, high-frequency data (such as vibration, audio, or video) to remote servers for analysis. While functional for simple monitoring, this architecture presents three major roadblocks:

Network Constraint: Sending massive amounts of data strains wireless bandwidth and adds transmission latency, which makes real-time decision-making difficult or impossible.

Energy Constraint: Radio transmissions (via Wi-Fi, cellular, or LoRa) are often the largest power drain on battery-operated devices, limiting operational life.

Privacy Constraint: Sensitive data, such as private voice commands or camera feeds, must travel over the internet, introducing significant security and privacy risks.

The need for immediate, localized data processing has catalyzed the shift toward Edge Computing, where the computation happens close to the source of the data.

1.2 Defining the New Paradigm: AIoT and TinyML

The integration of Artificial Intelligence (AI) capabilities directly into the IoT infrastructure is termed the Artificial Intelligence of Things (AIoT). AIoT represents a profound change: devices transform from mere data transmitters into intelligent, autonomous decision-makers. Instead of blindly sending every data point, an AIoT device analyzes the data locally and only transmits a meaningful result (an “inference”), such as “Anomaly Detected” or “Fan Broken.”

The specific field enabling this transformation is Tiny Machine Learning (TinyML). TinyML is not a single technology, but a specialized engineering practice focused on developing, optimizing, and deploying sophisticated Machine Learning (ML) models—specifically Neural Networks—onto severely resource-constrained hardware, namely 32-bit microcontrollers (MCUs) with limited flash memory (often under 512KB) and minimal RAM (sometimes as low as 32KB).

1.3 The Core Pillars of TinyML Success

The successful implementation of a TinyML application hinges on several core technical and conceptual pillars that this article will address:

Model Quantization: The necessary process of converting model weights and activations from high-precision 32-bit floating-point numbers to low-precision 8-bit integers. This step is mandatory to fit the “brain” onto the tiny memory footprint of an MCU.

Digital Signal Processing (DSP): The use of specialized algorithms (such as Fast Fourier Transforms or Spectrograms) to preprocess and extract meaningful features from raw sensor data, significantly reducing the computational burden on the Neural Network itself.

Hardware Optimization: Leveraging hardware-specific kernel libraries, such as ARM’s CMSIS-NN, which uses Single Instruction, Multiple Data (SIMD) instructions to execute matrix multiplication—the heart of a Neural Network—as efficiently as possible on the target microcontroller.
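To make the Hardware Optimization pillar more concrete, here is a minimal NumPy sketch of the arithmetic an integer kernel performs: 8-bit multiplications summed into a 32-bit accumulator. This is an illustration only, not the CMSIS-NN API, and `int8_matvec` is a hypothetical name. On a Cortex-M core with SIMD support, the optimized library performs more than one of these multiply-accumulate steps per instruction.

```python
import numpy as np

def int8_matvec(weights_q, x_q):
    """Matrix-vector product on int8 data with int32 accumulation."""
    assert weights_q.dtype == np.int8 and x_q.dtype == np.int8
    # Widen before multiplying: the product of two int8 values does not
    # fit in int8, so integer kernels accumulate in 32 bits.
    return weights_q.astype(np.int32) @ x_q.astype(np.int32)

weights_q = np.array([[127, -128, 5], [1, 2, 3]], dtype=np.int8)
x_q = np.array([127, 127, -1], dtype=np.int8)
acc = int8_matvec(weights_q, x_q)
print(acc)
```

The widening step is the important design point: without it, intermediate products would silently overflow.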

2. Prerequisites

Unlike standard firmware development, Embedded AI requires a mix of hardware knowledge and data science awareness.

Hardware

- 32-bit Microcontroller: Cortex-M4, M7, M33, or Xtensa LX6/LX7 (e.g., ESP32-S3, STM32, Arduino Pro, or Raspberry Pi Pico).

- Sensors: The “eyes and ears.” Accelerometers (IMU), PDM microphones, vibration sensors, and many others.

- Computer: Capable of running Python scripts or browser-based training tools, such as Google Colab.

Software Concepts

- C/C++: For the actual firmware implementation.

- Python: The standard language for training Neural Networks (TensorFlow/PyTorch).

- DSP (Digital Signal Processing): Understanding how to clean signals before feeding them to AI.

3. Theory: What are AIoT and TinyML?

Before diving into the tools, we must define the environment.

AIoT is the fusion of AI capabilities with IoT infrastructure. Instead of a “dumb” sensor sending temperature data every second, an AIoT sensor might only wake up and transmit when it detects a specific anomaly or pattern.

TinyML is the specific field of engineering focused on running Machine Learning models on hardware with low power consumption and limited memory.

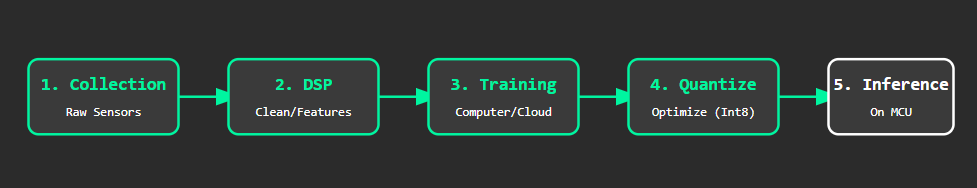

Diagram comparing the classic cloud-based IoT data processing workflow with the low-latency, low-power TinyML/AIoT edge processing approach.

Why move AI to the Edge?

- Latency: No network round-trip time. Decisions happen in milliseconds.

- Bandwidth: Streaming high-frequency vibration or audio data consumes substantial bandwidth. Sending only the “inference result” takes a few bytes.

- Privacy: Voice or camera data never leaves the device; only the processed result (e.g., “Person Detected”) is transmitted.

- Power: Transmitting data via Wi-Fi/LoRa typically consumes significantly more energy than processing that data locally on the CPU.
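The bandwidth argument can be checked with back-of-the-envelope arithmetic. The sketch below compares a raw audio stream with a stream of inference results. All four constants are assumptions chosen for illustration (a 16 kHz, 16-bit microphone and a 2-byte result sent once per second), and protocol overhead is ignored.

```python
SAMPLE_RATE_HZ = 16_000      # assumed microphone sample rate
BYTES_PER_SAMPLE = 2         # assumed 16-bit PCM samples
RESULT_BYTES = 2             # assumed payload: class id + confidence
RESULTS_PER_SECOND = 1       # assumed: one inference result per second

raw_bytes_per_s = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE
edge_bytes_per_s = RESULT_BYTES * RESULTS_PER_SECOND
reduction = raw_bytes_per_s / edge_bytes_per_s

print(f"raw stream:   {raw_bytes_per_s} B/s")
print(f"edge results: {edge_bytes_per_s} B/s")
print(f"reduction:    {reduction:.0f}x")
```

Under these assumptions the radio carries four orders of magnitude less payload, which is also where much of the power saving comes from.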

4. The Embedded AI Ecosystem

Just as you choose an IDE for coding, you must choose an Inference Engine or Workflow Tool for TinyML. Here are the industry leaders we will explore in this series.

A. CMSIS-NN (The Foundation)

Developed by ARM, this is not a user-facing tool but an optimized kernel library.

- Role: It maximizes the performance of Neural Networks on Cortex-M processors using SIMD (Single Instruction, Multiple Data) instructions.

- Usage: Most high-level tools (like TFLM or Edge Impulse) use CMSIS-NN under the hood to make your math run faster.

- ARM-software/CMSIS-NN: CMSIS-NN Library

B. Edge Impulse (The “DevOps” Platform)

Edge Impulse is currently the leading platform for accessible TinyML.

- What it is: A web-based end-to-end platform.

- Features: It handles data collection, Digital Signal Processing (DSP) configuration, Model Training, and deployment (C++ library export).

- Best for: Beginners and pros who want a visual interface for the entire pipeline.

- Edge Impulse – The Leading Edge AI Platform

C. STMicroelectronics X-CUBE-AI

If you are using the STM32 ecosystem, this is a powerful expansion pack for STM32CubeMX.

- What it is: A model converter and optimizer.

- Features: You bring a pre-trained model (from Keras/TensorFlow), and X-CUBE-AI converts it into highly optimized C-code specific to your STM32 chip. It provides detailed reports on RAM/Flash usage before you compile.

- X-CUBE-AI | Product – STMicroelectronics

D. NanoEdge AI Studio (STMicroelectronics)

A unique offering that differs significantly from standard Neural Network workflows.

- What it is: An automated machine learning (AutoML) search tool.

- Key Differentiator: It specializes in Anomaly Detection and is capable of On-Device Learning.

- Usage: Unlike others where you train on a PC and deploy to the MCU, NanoEdge libraries can actually learn what “Normal” looks like after being deployed in the field.

- NanoEdgeAIStudio | Software – STMicroelectronics
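To give a feel for what learning “Normal” on the device can mean, here is a deliberately simple sketch that updates a running mean and variance one sample at a time (Welford's algorithm) and flags readings that fall far outside that range. This is not how NanoEdge AI Studio works internally; the class name, the threshold, and the readings are all hypothetical and serve only to illustrate the idea of learning after deployment.

```python
import math

class RunningAnomalyDetector:
    """Learns mean/variance of a 'normal' signal online (Welford's algorithm)."""

    def __init__(self, threshold_sigmas=4.0):
        self.n = 0
        self.mean = 0.0
        self.m2 = 0.0                 # running sum of squared deviations
        self.threshold_sigmas = threshold_sigmas

    def learn(self, value):
        """Update the statistics with one new 'normal' reading."""
        self.n += 1
        delta = value - self.mean
        self.mean += delta / self.n
        self.m2 += delta * (value - self.mean)

    def is_anomaly(self, value):
        if self.n < 2:
            return False              # not enough data to judge yet
        std = math.sqrt(self.m2 / (self.n - 1))
        return abs(value - self.mean) > self.threshold_sigmas * max(std, 1e-9)

detector = RunningAnomalyDetector()
for reading in [1.0, 1.1, 0.9, 1.05, 0.95, 1.0, 1.02, 0.98]:
    detector.learn(reading)

print(detector.is_anomaly(1.03), detector.is_anomaly(2.5))
```

The update needs only three stored numbers, which is why this style of learning fits in a few bytes of RAM.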

5. The TinyML Workflow

Regardless of the tool you choose, the development lifecycle (The “Sketch” logic) always follows these five steps.

1. Data Collection

Garbage in, Garbage out. You need to record data from your sensors that represents the events you want to detect (e.g., “Fan On”, “Fan Off”, “Fan Broken”).

2. Pre-Processing (DSP)

Raw data is noisy and large. We use DSP (FFT, Spectrograms, Moving Averages) to extract features. It is easier for the model to classify a “Frequency Peak” than a raw audio wave.
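As a minimal sketch of this step, the following NumPy code reduces 1,000 noisy samples to a single feature: the dominant frequency. The signal is synthetic (a 50 Hz sine plus noise, sampled at an assumed 1 kHz), and `dominant_frequency` is a hypothetical helper, not part of any of the tools discussed here.

```python
import numpy as np

def dominant_frequency(signal, sample_rate_hz):
    """Return the frequency (Hz) of the strongest non-DC spectral component."""
    windowed = signal * np.hanning(len(signal))   # reduce spectral leakage
    spectrum = np.abs(np.fft.rfft(windowed))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate_hz)
    spectrum[0] = 0.0                             # ignore the DC offset
    return freqs[np.argmax(spectrum)]

sample_rate_hz = 1000                             # assumed sensor sample rate
t = np.arange(1000) / sample_rate_hz
rng = np.random.default_rng(0)
signal = np.sin(2 * np.pi * 50 * t) + 0.2 * rng.standard_normal(t.size)

peak_hz = dominant_frequency(signal, sample_rate_hz)
print(f"dominant frequency: {peak_hz:.1f} Hz")
```

A real pipeline would usually keep several spectral features rather than one, but the principle is the same: the classifier sees a handful of numbers instead of the raw waveform.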

3. Training

The computer uses the features to adjust the weights of a Neural Network until it can mathematically separate your classes with high accuracy.
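The idea of adjusting weights until the classes separate can be shown with the smallest possible network: a single neuron trained by gradient descent. The sketch below uses plain NumPy and a synthetic two-feature dataset; the data, learning rate, and iteration count are assumptions for illustration. A real project would use a framework such as TensorFlow or PyTorch.

```python
import numpy as np

rng = np.random.default_rng(0)
# Synthetic features: class 0 clusters around (-2, -2), class 1 around (+2, +2).
x = np.vstack([rng.normal(-2.0, 1.0, (100, 2)), rng.normal(2.0, 1.0, (100, 2))])
y = np.concatenate([np.zeros(100), np.ones(100)])

w = np.zeros(2)          # weights, adjusted during training
b = 0.0                  # bias
learning_rate = 0.1

for _ in range(200):
    p = 1.0 / (1.0 + np.exp(-(x @ w + b)))   # sigmoid prediction
    grad_w = x.T @ (p - y) / len(y)           # gradient of the cross-entropy loss
    grad_b = np.mean(p - y)
    w -= learning_rate * grad_w               # step against the gradient
    b -= learning_rate * grad_b

accuracy = np.mean(((x @ w + b) > 0) == (y == 1))
print(f"training accuracy: {accuracy:.2%}")
```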

4. Quantization

This is critical for microcontrollers. We convert the model’s math from 32-bit Floating Point (heavy, slow) to 8-bit Integers (light, fast). Because each value shrinks from 4 bytes to 1, this reduces model size by roughly 4x, often with minimal loss in accuracy.
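The conversion can be sketched as an affine mapping with a scale and a zero point. The code below is a simplified illustration in NumPy, not the exact scheme used by any particular converter; `quantize_int8` and `dequantize` are hypothetical helpers and the weights are random stand-ins for a trained model.

```python
import numpy as np

def quantize_int8(values):
    """Affine-quantize a float32 array to int8; returns (q, scale, zero_point)."""
    lo, hi = float(values.min()), float(values.max())
    lo, hi = min(lo, 0.0), max(hi, 0.0)        # make sure 0.0 is representable
    scale = (hi - lo) / 255.0 or 1.0           # guard against an all-zero input
    zero_point = int(round(-128 - lo / scale))
    q = np.clip(np.round(values / scale) + zero_point, -128, 127).astype(np.int8)
    return q, scale, zero_point

def dequantize(q, scale, zero_point):
    """Map int8 values back to approximate float32 values."""
    return (q.astype(np.float32) - zero_point) * scale

rng = np.random.default_rng(0)
weights = rng.normal(0.0, 0.5, 1000).astype(np.float32)   # stand-in weights
q, scale, zero_point = quantize_int8(weights)
max_error = np.max(np.abs(weights - dequantize(q, scale, zero_point)))

print(f"float32: {weights.nbytes} bytes, int8: {q.nbytes} bytes")
print(f"max round-trip error: {max_error:.5f} (one step = {scale:.5f})")
```

The round-trip error stays within about one quantization step, which is the precision given up in exchange for the smaller footprint.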

5. Inference

This is the “Loop” function in your Arduino sketch. The MCU takes live sensor data, runs the math using the trained model, and outputs a probability (e.g., “98% sure the fan is broken”).
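The final step, turning raw model outputs into a label and a probability, is commonly done with a softmax. The sketch below assumes a three-class model using the fan labels from the data collection step. The logits are made-up values standing in for a real model's output, and `classify` is a hypothetical helper.

```python
import numpy as np

LABELS = ["Fan On", "Fan Off", "Fan Broken"]   # class names from the fan example

def classify(logits):
    """Turn raw model outputs into (label, probability) via softmax."""
    shifted = logits - np.max(logits)          # subtract max for numerical stability
    exp = np.exp(shifted)
    probs = exp / np.sum(exp)
    best = int(np.argmax(probs))
    return LABELS[best], float(probs[best])

# Hypothetical logits standing in for the output of a trained model.
label, prob = classify(np.array([0.5, -1.0, 4.5]))
print(f"{prob:.0%} sure: {label}")
```

On a microcontroller the same logic runs in C/C++ inside the main loop, once per window of sensor data.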

6. Series Recap & Architecture

This project (and series) integrates three core concepts: Physical Sensing, Signal Processing, and Inference Logic.

The TinyML Layered Architecture

The following table outlines how we will approach the upcoming projects:

| Layer | Component | Role | Key Technology |
| --- | --- | --- | --- |
| Input (Physical) | Sensors (IMU/Mic) | Captures real-world analog phenomena. | I2C / I2S / PDM |
| Processing (The Brain) | DSP & Model | Cleans data and detects patterns (Inference). | CMSIS-NN / TensorFlow Lite |
| Application (The Logic) | Firmware Code | Acts on the result (Turn on LED, Send Alert). | C++ / RTOS |
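The three layers can be pictured as three functions chained together. The Python sketch below is a desktop simulation only: the sensor read is faked with random numbers, a simple RMS threshold stands in for the DSP and model stage, and all function names and the threshold are hypothetical. Real firmware would implement the same structure in C/C++.

```python
import numpy as np

def read_sensor(rng, n_samples=64):
    """Input layer: stand-in for an I2C/I2S sensor read (simulated here)."""
    return rng.normal(0.0, 1.0, n_samples)

def process(samples, threshold=2.0):
    """Processing layer: a DSP feature (RMS) plus a placeholder decision rule."""
    rms = float(np.sqrt(np.mean(samples ** 2)))
    return "anomaly" if rms > threshold else "normal"

def act(result):
    """Application layer: decide what the firmware does with the result."""
    return "send_alert" if result == "anomaly" else "idle"

rng = np.random.default_rng(0)
quiet = read_sensor(rng)
action = act(process(quiet))               # normal vibration level
loud_action = act(process(quiet * 5.0))    # same signal, five times stronger
print(action, loud_action)
```

Keeping the layers separate means the model in the middle can be replaced without touching the sensor driver or the application logic.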

Key Takeaways for this Series

- It is not Magic, it is Math: Inference on a microcontroller is mostly matrix multiplication. It is deterministic and debuggable.

- Data is King: As a rule of thumb, expect to spend about 80% of your time collecting and cleaning data, and only 20% designing the model.

- Quantization is Mandatory: To fit a brain into a chip with 32KB of RAM, we must compromise on precision to gain efficiency.

- Tool Selection Matters:

  - Use Edge Impulse for rapid prototyping and visual workflows.

  - Use X-CUBE-AI if you already have a model and use STM32.

  - Use NanoEdge AI Studio for industrial anomaly detection without large datasets.